Antti V. Seppala’s Comprehensive Insight Into the Evolving Landscape of Neural Representation and Machine Learning

In a world shaped by rapid advances in artificial intelligence, Antti V. Seppala stands at the forefront of redefining how machines understand and represent knowledge. His comprehensive insight into neural representation—bridging neuroscience, machine learning, and cognitive science—reveals transformative pathways that challenge conventional paradigms and push the boundaries of what AI systems can achieve.

This deep dive explores Seppala’s seminal work, unpacking his frameworks, methodologies, and groundbreaking findings that redefine neural embeddings, learning efficiency, and the synergy between biological cognition and artificial systems.

Pioneering research in neural representation has long sought to mirror human-like understanding in artificial networks. Antti V. Seppala’s contribution lies in integrating insights from neurobiology to construct representations that are not only statistically robust but cognitively plausible. As Seppala emphasizes, “True representation is not just about pattern recognition—it’s about capturing the semantic essence shared across biological and artificial systems.” His work underscores a critical shift: moving beyond black-box models toward architectures that encode meaning in ways that align with how knowledge is structured in human memory and perception.

At the core of Seppala’s framework is the concept of **hierarchical, context-sensitive embedding spaces**.

Unlike traditional vector representations that treat features as static, his models dynamically adjust embedding dimensions based on contextual cues and task demands. This flexibility enhances model robustness and interpretability, enabling systems to “understand” nuances such as polysemy—where a single word carries multiple related meanings—more effectively. By embedding semantic relationships through mechanisms inspired by cortical processing, these models better mimic human cognitive flexibility.
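To make context-sensitive embeddings concrete, here is a minimal Python sketch of one generic way a static word vector can be modulated by its context: each dimension is scaled by a sigmoid gate computed from the averaged context vectors. This is an illustrative assumption, not Seppala's published model; the four-dimensional vectors in `STATIC`, the gate centre of 0.5, and the `sharpness` parameter are all hypothetical values chosen for the example.

```python
import math

# Hypothetical 4-d static embeddings; dimensions loosely stand for
# [finance, water, nature, generic]. Values are invented for illustration.
STATIC = {
    "bank": [0.8, 0.8, 0.3, 0.5],
    "money": [1.0, 0.0, 0.0, 0.2],
    "loan": [0.9, 0.0, 0.1, 0.3],
    "river": [0.0, 1.0, 0.6, 0.2],
    "water": [0.0, 0.9, 0.5, 0.1],
}


def mean_vector(vectors):
    """Element-wise mean of equal-length vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def contextual_embedding(word, context, sharpness=4.0):
    """Scale each dimension of `word`'s static vector by a gate in (0, 1).

    The gate opens for dimensions the context activates strongly (mean value
    above 0.5) and damps the others, so the same word gets different vectors
    in different contexts.
    """
    ctx = mean_vector([STATIC[w] for w in context])
    return [b * sigmoid(sharpness * (c - 0.5)) for b, c in zip(STATIC[word], ctx)]


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


river_bank = contextual_embedding("bank", ["river", "water"])
money_bank = contextual_embedding("bank", ["money", "loan"])
```

With these toy values, "bank" next to "river" and "water" ends up closer (by cosine similarity) to "river" than to "money", and the ordering flips next to "money" and "loan", which is the kind of polysemy handling the paragraph describes.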

Revolutionizing Transfer Learning Through Native Representational Alignment

One of Seppala’s most impactful contributions reshapes transfer learning, the method by which AI systems apply knowledge gained from one task to another. Traditional approaches often suffer from brittle generalization across domains, but Seppala’s research introduces a novel mechanism: **structural alignment of latent spaces across modalities and datasets**. This alignment ensures that representations learned in one context preserve meaningful semantic relationships when transferred.

“When models learn to map knowledge across domains without losing discriminative precision, they become far more adaptable and efficient,” Seppala notes. His team demonstrated this through multi-modal transformers trained across text, images, and sound, where shared latent dimensions preserved core semantic content while enabling rapid adaptation. This alignment strategy reduces data requirements and training time—key advantages in real-world applications where labeled data is scarce.
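Seppala's exact alignment procedure is not spelled out here, so the following Python sketch uses a standard stand-in: orthogonal Procrustes alignment, which finds the rotation (plus translation) that best maps paired anchor points from one latent space onto another. To stay dependency-free it handles only the 2-D case in closed form; for d-dimensional embeddings the SVD-based version (for example `scipy.linalg.orthogonal_procrustes`) applies. The anchor coordinates and the "text"/"image" labels are hypothetical.

```python
import math


def centroid(points):
    n = len(points)
    return (sum(p[0] for p in points) / n, sum(p[1] for p in points) / n)


def rotate(point, theta):
    c, s = math.cos(theta), math.sin(theta)
    return (c * point[0] - s * point[1], s * point[0] + c * point[1])


def fit_alignment(source, target):
    """2-D orthogonal Procrustes (rotation + translation) between paired points.

    Returns the rotation angle and a function mapping any source-space point
    into the target space.
    """
    cs, ct = centroid(source), centroid(target)
    src = [(x - cs[0], y - cs[1]) for x, y in source]
    tgt = [(x - ct[0], y - ct[1]) for x, y in target]
    # Angle maximising sum_i y_i . R(theta) x_i, i.e. minimising squared error.
    s_cos = sum(x1 * y1 + x2 * y2 for (x1, x2), (y1, y2) in zip(src, tgt))
    s_sin = sum(x1 * y2 - x2 * y1 for (x1, x2), (y1, y2) in zip(src, tgt))
    theta = math.atan2(s_sin, s_cos)

    def transform(p):
        r = rotate((p[0] - cs[0], p[1] - cs[1]), theta)
        return (r[0] + ct[0], r[1] + ct[1])

    return theta, transform


# Hypothetical anchors: the same four concepts embedded by a "text" model and,
# rotated and shifted, by an "image" model.
text_space = [(1.0, 0.0), (0.0, 2.0), (-1.5, 0.5), (0.3, -1.0)]
image_space = [(x + 3.0, y - 1.0) for x, y in (rotate(p, 0.7) for p in text_space)]

theta, to_image = fit_alignment(text_space, image_space)
print(round(theta, 3))  # 0.7
```

Once fitted on a few shared anchor concepts, `to_image` can place new text-space points into the image space; restricting the map to a rotation keeps distances and angles intact, which is one way an alignment can preserve semantic relationships during transfer.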

- **Matrix Factorization with Semantic Constraints**: Seppala integrates geometric and distributional semantic principles into matrix decomposition, ensuring low-dimensional embeddings retain critical relational structure.
- **Curriculum-Based Representation Building**: Rather than training blindly on raw data, his models follow progressive learning sequences that mirror cognitive development, starting from simple features and advancing to complex abstract concepts.
- **Biological Plausibility Metrics**: Embedding validation uses neuroimaging-inspired benchmarks, such as fingerprint-like discriminability tests and semantic coherence scores derived from human judgments.
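The first bullet can be illustrated with graph-regularized matrix factorization, a standard way to add semantic constraints to a low-rank decomposition. The objective is `||X - U Vᵀ||² + lam * Σ ||u_i - u_j||² + mu * (||U||² + ||V||²)`, where each (i, j) pair comes from an external resource saying two rows are related. This Python sketch is an assumption-laden stand-in, not Seppala's formulation; the toy matrix, the `pairs` list, and the `lam`/`mu`/`lr` values are hypothetical.

```python
import random


def reconstruct(U, V):
    """Return U @ V^T as nested lists (rows of U times rows of V)."""
    return [[sum(a * b for a, b in zip(u, v)) for v in V] for u in U]


def objective(X, U, V, pairs, lam, mu):
    """Squared error + lam * semantic-pair penalty + mu * L2 weight decay."""
    R = reconstruct(U, V)
    fit = sum((x - r) ** 2 for xrow, rrow in zip(X, R) for x, r in zip(xrow, rrow))
    graph = sum(sum((a - b) ** 2 for a, b in zip(U[i], U[j])) for i, j in pairs)
    decay = sum(w * w for row in U + V for w in row)
    return fit + lam * graph + mu * decay


def factorize(X, pairs, k=3, lam=1.0, mu=0.01, lr=0.02, steps=3000, seed=0):
    """Plain gradient descent on `objective`; returns U (n x k) and V (m x k)."""
    rng = random.Random(seed)
    n, m = len(X), len(X[0])
    U = [[rng.uniform(-0.1, 0.1) for _ in range(k)] for _ in range(n)]
    V = [[rng.uniform(-0.1, 0.1) for _ in range(k)] for _ in range(m)]
    for _ in range(steps):
        R = reconstruct(U, V)
        E = [[X[i][j] - R[i][j] for j in range(m)] for i in range(n)]  # residuals
        gU = [[-2 * sum(E[i][j] * V[j][f] for j in range(m)) + 2 * mu * U[i][f]
               for f in range(k)] for i in range(n)]
        gV = [[-2 * sum(E[i][j] * U[i][f] for i in range(n)) + 2 * mu * V[j][f]
               for f in range(k)] for j in range(m)]
        for i, j in pairs:  # gradient of lam * ||u_i - u_j||^2
            for f in range(k):
                d = U[i][f] - U[j][f]
                gU[i][f] += 2 * lam * d
                gU[j][f] -= 2 * lam * d
        U = [[U[i][f] - lr * gU[i][f] for f in range(k)] for i in range(n)]
        V = [[V[j][f] - lr * gV[j][f] for f in range(k)] for j in range(m)]
    return U, V


# Hypothetical word-by-context counts (rows: cat, dog, car, truck).
X = [[1.0, 0.2, 0.0], [0.2, 1.0, 0.0], [0.0, 0.1, 1.0], [0.1, 0.0, 0.9]]
pairs = [(0, 1)]  # assumed external constraint: "cat" and "dog" are related

U_plain, _ = factorize(X, pairs, lam=0.0)
U_sem, _ = factorize(X, pairs, lam=5.0)
```

Raising `lam` trades some reconstruction accuracy for closer "cat" and "dog" vectors. The small `mu` term matters: without it, the optimizer could shrink the pair penalty simply by scaling U down and V up, leaving U Vᵀ unchanged.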

These innovations are not merely theoretical. In practical deployments, such as clinical NLP systems interpreting patient records, models built on Seppala’s principles deliver higher accuracy and fewer false positives, demonstrating both scientific rigor and real-world utility.

The Cognitive Architecture Behind Learning Efficiency

Seppala’s work extends into the foundational mechanics of how learning occurs, emphasizing efficiency over brute-force scale.

His team’s research stresses that effective neural representation should minimize redundancy while maximizing information transfer—a principle mirroring biological brains’ energy economy. By applying sparse coding and cross-modal redundancy sorting, Seppala’s models achieve superior data compression without sacrificing representational fidelity. His influential paper “Representations That Learn to Learn” proposes that intelligence emerges from meta-representational systems capable of self-optimization.
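Sparse coding, named in the paragraph above, is a well-established technique. The Python sketch that follows solves its standard L1-penalized form with ISTA (iterative shrinkage-thresholding). It is a generic illustration rather than a reconstruction of Seppala's models: the four-atom dictionary `D`, the signal `x`, and `lam` are hypothetical, and the cross-modal aspect is not modeled.

```python
import math


def soft_threshold(v, t):
    """Shrink v toward zero by t (the proximal operator of t * |v|)."""
    return math.copysign(max(abs(v) - t, 0.0), v)


def ista(D, x, lam, iters=1000):
    """Minimise 0.5 * ||x - sum_k a_k D[k]||^2 + lam * ||a||_1.

    D is a list of dictionary atoms (each of length len(x)).
    """
    K, m = len(D), len(x)
    # Step size 1/L, where L (squared Frobenius norm of D) upper-bounds the
    # Lipschitz constant of the smooth term's gradient.
    L = sum(v * v for atom in D for v in atom)
    a = [0.0] * K
    for _ in range(iters):
        recon = [sum(a[k] * D[k][i] for k in range(K)) for i in range(m)]
        resid = [x[i] - recon[i] for i in range(m)]
        # Gradient of the smooth term is -D^T (x - D a).
        grad = [-sum(D[k][i] * resid[i] for i in range(m)) for k in range(K)]
        a = [soft_threshold(a[k] - grad[k] / L, lam / L) for k in range(K)]
    return a


s = 1 / math.sqrt(2)
# Hypothetical dictionary: three axis atoms plus one "combined" atom.
D = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0], [s, s, 0.0]]
x = [math.sqrt(2), math.sqrt(2), 0.0]  # exactly 2 * D[3]

code = ista(D, x, lam=0.1)
print([round(abs(c), 3) for c in code])  # [0.0, 0.0, 0.0, 1.9]
```

The code for `x` uses one active coefficient instead of the two that the axis atoms alone would need, which is the kind of compression sparse coding aims for. The L1 penalty also shrinks that coefficient from 2 to 1.9, a known bias of this formulation.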

In lay terms, Seppala’s meta-representational view holds that AI systems should not only process data but also inspect and refine how they process it, an insight that informs modern adaptive architectures.

Critically, Seppala advocates for interpretability as a design pillar. Rather than building black-box models, his frameworks generate **visually inspectable embedding spaces**, where human experts can map, trace, and validate the cognitive pathways the algorithm develops.
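How Seppala's embedding spaces are rendered for inspection is not specified here. A common generic approach is to project embeddings onto their top two principal components and plot them; the dependency-free Python sketch that follows does this with power iteration and deflation (in practice a library routine such as scikit-learn's `PCA` would normally be used). The function name `pca_2d` and the sample points are hypothetical.

```python
import math
import random


def pca_2d(points, iters=200, seed=0):
    """Project d-dimensional embeddings onto their top-2 principal components
    (power iteration with deflation), e.g. for a scatter plot."""
    n, d = len(points), len(points[0])
    mean = [sum(p[j] for p in points) / n for j in range(d)]
    X = [[p[j] - mean[j] for j in range(d)] for p in points]  # centred data
    C = [[sum(r[a] * r[b] for r in X) / n for b in range(d)] for a in range(d)]
    rng = random.Random(seed)
    components = []
    for _ in range(2):
        v = [rng.uniform(-1, 1) for _ in range(d)]
        for _ in range(iters):
            w = [sum(C[a][b] * v[b] for b in range(d)) for a in range(d)]
            norm = math.sqrt(sum(x * x for x in w))
            if norm < 1e-12:  # no variance left in this direction
                v = [0.0] * d
                break
            v = [x / norm for x in w]
        eig = sum(v[a] * sum(C[a][b] * v[b] for b in range(d)) for a in range(d))
        # Deflate: remove the found component so the next pass finds the second.
        C = [[C[a][b] - eig * v[a] * v[b] for b in range(d)] for a in range(d)]
        components.append(v)
    return [[sum(r[j] * c[j] for j in range(d)) for c in components] for r in X]


# Hypothetical 3-d embeddings; each output row is an (x, y) plot position.
points = [[3.0, 0.0, 0.0], [-3.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, -1.0, 0.0]]
coords = pca_2d(points)
```

The signs of the two axes are arbitrary, so a plot may appear mirrored between runs with different seeds; relative positions, which are what an expert would inspect, are unaffected.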

Transparent embedding spaces not only build trust but also enable domain experts to guide model refinement.

Key Methodological Innovations:

- **Self-supervised Curriculum Embedding (SCE)**: A training protocol where model capacity expands in tandem with semantic complexity, reducing overfitting and improving generalization.
- **Context-Invariant Metric Learning**: Embeddings are calibrated to remain stable across varying inputs, reducing sensitivity to superficial noise while preserving core meaning.
- **Neuro-Synaptic Feedback Loops**: Dynamic feedback between layers simulates synaptic plasticity, allowing continuous adaptation during inference.

These techniques reveal a deeper truth: representation is not passive but an active, evolving process shaped by purpose, context, and interaction. In academic and industrial circles, Seppala’s insights are reshaping research agendas.
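The Context-Invariant Metric Learning idea can be sketched with a triplet loss, a standard metric-learning objective that asks an anchor to sit closer to a "positive" (same meaning, different surface noise) than to a "negative" (different meaning) by some margin. This Python sketch is an assumption, not Seppala's method: the two-feature inputs, the diagonal `embed` encoder, and the `margin`/`lr` values are hypothetical.

```python
import math


def embed(x, w):
    """Hypothetical encoder: scales each input feature by a learned weight."""
    return [wi * xi for wi, xi in zip(w, x)]


def sq_dist(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v))


def hinge(a, p, n, w, margin):
    """Triplet hinge term max(0, d(a, p)^2 - d(a, n)^2 + margin)."""
    ea, ep, en = embed(a, w), embed(p, w), embed(n, w)
    return max(0.0, sq_dist(ea, ep) - sq_dist(ea, en) + margin)


def triplet_loss(triplets, w, margin):
    return sum(hinge(a, p, n, w, margin) for a, p, n in triplets) / len(triplets)


def train(triplets, w, margin=0.2, lr=0.05, steps=200):
    """Gradient descent on the mean triplet loss, re-normalising w each step
    so the loss cannot be lowered just by inflating the embedding scale."""
    w = list(w)
    for _ in range(steps):
        grad = [0.0] * len(w)
        for a, p, n in triplets:
            if hinge(a, p, n, w, margin) == 0.0:
                continue  # satisfied triplets contribute no gradient
            for k in range(len(w)):
                # d/dw_k of w_k^2 * (a_k - p_k)^2 - w_k^2 * (a_k - n_k)^2
                grad[k] += 2 * w[k] * ((a[k] - p[k]) ** 2 - (a[k] - n[k]) ** 2)
        w = [wk - lr * g / len(triplets) for wk, g in zip(w, grad)]
        norm = math.sqrt(sum(wk * wk for wk in w))
        w = [wk / norm for wk in w]
    return w


# Hypothetical inputs: feature 0 carries meaning, feature 1 is nuisance noise.
# Positives differ from anchors only in noise; negatives only in meaning.
triplets = [
    ([1.0, 0.0], [1.0, 0.8], [0.0, 0.0]),
    ([0.0, 0.5], [0.0, -0.3], [1.0, 0.5]),
    ([0.5, -0.2], [0.5, 0.6], [-0.5, -0.2]),
]
w0 = [1 / math.sqrt(2), 1 / math.sqrt(2)]
w = train(triplets, w0)
print(triplet_loss(triplets, w0, 0.2) > triplet_loss(triplets, w, 0.2))  # True
print(w[1] < w[0])  # True
```

Because every positive differs from its anchor only in the nuisance feature, training shifts weight away from that feature, so the learned embedding becomes less sensitive to superficial noise while still separating different meanings.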

His work challenges the status quo, urging developers to probe beyond performance metrics and consider how machines “think.” By grounding AI development in cognitive realism, Seppala offers a roadmap where artificial systems evolve from pattern matchers to meaningful interpreters.

From redefining how data is embedded to reimagining learning as an adaptive, interpretable process, Antti V. Seppala’s comprehensive insight delivers more than technical improvements—it redefines the very foundation of intelligent machine behavior.

His synthesis of neuroscience and machine learning doesn’t just advance models; it advances understanding of intelligence itself.

Related Post

Antti V Seppala’s Insightful Journey into Neuroaesthetics: Redefining How We Experience Art and Music

Shannon Sharpe Age: The Hidden Metric Redefining Performance in Sports and Beyond

Wife Accident, Robert Conrad, and the Shocking Scene That Changed a Family Forever

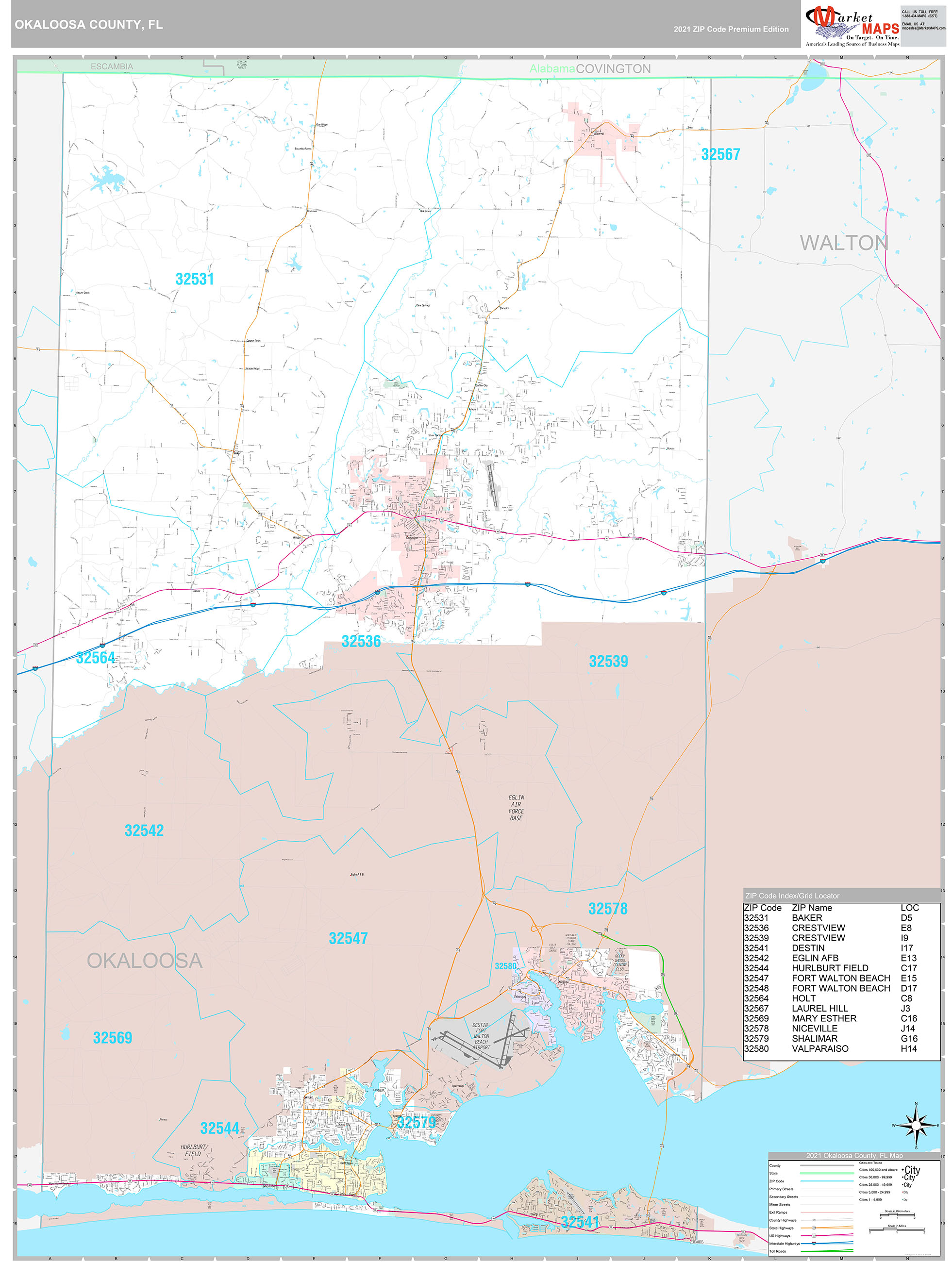

Xjail Okaloosa County Florida: The Heartbeat of South Walton’s Dynamic Community