Breaking the Silence: How “Googles für Gehörlose – Die Übersetzung” Rescues Communication

In a world where real-time communication shapes connection, deaf individuals often face invisible walls that limit participation, whether in classrooms, medical visits, or social settings. Innovative tools such as translation systems powered by artificial intelligence are beginning to dismantle those barriers. For the deaf community, technology is not just a convenience; it is a lifeline that changes how messages move between spoken, written, and signed language.

This revolution in accessibility centers on tools that realize the promise of “Googles für Gehörlose – Die Übersetzung”: bridging divides through instant, accurate translation. At the heart of this change lies real-time visual translation powered by AI. Unlike traditional sign language interpreters, who usually need to be physically present, software-driven translation platforms can convert spoken or written language into text or sign language animations almost instantly.

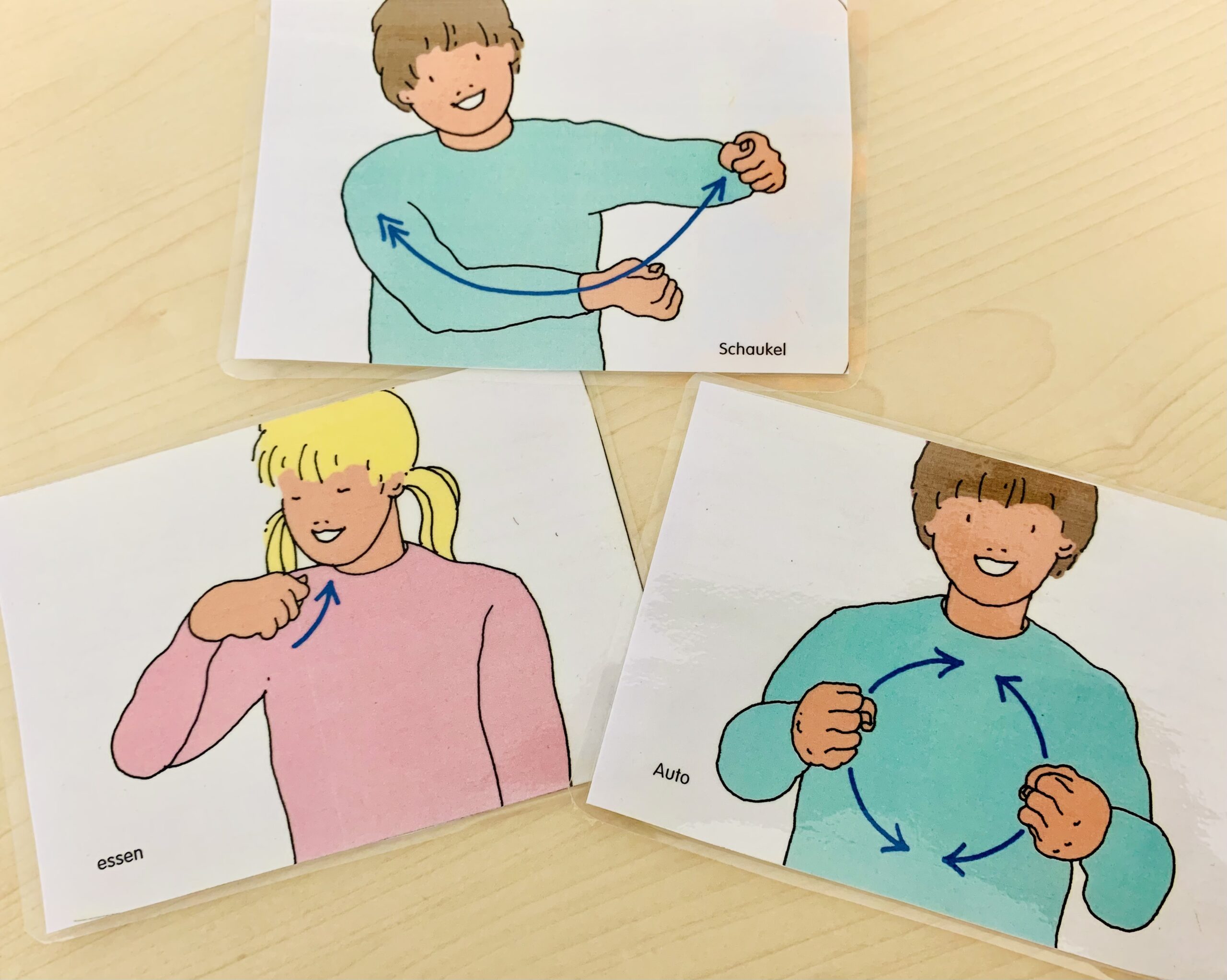

“Googles für Gehörlose” refers not to a single product but to a growing ecosystem of tools, from smartphone apps to embedded devices, optimized for sign language input and output. These systems combine speech recognition, optical character recognition (OCR), and motion-synthesis algorithms to reproduce sign forms with high fidelity.

AI-driven translation systems work through a layered process. First, microphones capture spoken language; then, speech-to-text algorithms transcribe the content. Next, machine learning models interpret meaning and context, which is critical for understanding idiomatic expressions, tone, or regional dialects. Finally, sign language translation engines generate animated avatars or digital figures performing the correct hand shapes, facial expressions, and body posture required in sign languages like German Sign Language (DGS).

“The nuance is key,” explains Dr. Lena Fischer, a linguist specializing in computational sign language processing. “Simple word-for-word translation misses cultural and emotional layers; true communication requires capturing the full expressive intent.”

One notable example: real-time subtitles rendered not just as text, but as animated signing at the center of the user’s screen, synchronized precisely with speech. “We’re moving from passive text display to dynamic visual storytelling,” notes Markus Lukas, a developer at a Berlin-based tech startup focused on deaf accessibility. “The system adapts gestures fluidly, mimicking natural signing rhythm—this changes everything.”
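The layered process described above can be sketched as a small pipeline. This is a minimal illustration, not any real product's implementation: the function names `transcribe`, `interpret`, and `render`, the `SignFrame` structure, and the gloss notation are all hypothetical, and each stage is a toy stand-in for a real speech recognizer, language model, or avatar engine.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SignFrame:
    """One keyframe for a signing avatar (hypothetical structure)."""
    gloss: str          # label of the sign being performed
    handshape: str      # placeholder handshape identifier
    facial_marker: str  # non-manual marker, e.g. "question" or "neutral"


def transcribe(audio_chunk: bytes) -> str:
    """Speech-to-text stand-in: the toy input is text encoded as bytes."""
    return audio_chunk.decode("utf-8")


def interpret(text: str) -> List[str]:
    """Meaning/context stand-in: turn text into uppercase sign glosses.

    A real model would also reorder and merge signs to follow the grammar
    of the target sign language; this toy version only detects questions.
    """
    is_question = text.strip().endswith("?")
    glosses = [w.strip("?.!,").upper() for w in text.split()]
    glosses = [g for g in glosses if g]
    if is_question:
        glosses.append("<Q>")  # marker consumed by the rendering stage
    return glosses


def render(glosses: List[str]) -> List[SignFrame]:
    """Avatar stand-in: attach a handshape and facial marker to each gloss."""
    marker = "question" if "<Q>" in glosses else "neutral"
    return [
        SignFrame(gloss=g, handshape=f"hs_{g.lower()}", facial_marker=marker)
        for g in glosses
        if g != "<Q>"
    ]


def translate(audio_chunk: bytes) -> List[SignFrame]:
    """Run the three stages in order: transcribe, interpret, render."""
    return render(interpret(transcribe(audio_chunk)))


frames = translate(b"Where is the doctor?")
for frame in frames:
    print(frame.gloss, frame.facial_marker)
```

The point of the sketch is the separation of stages: the facial marker is decided from sentence-level context rather than word by word, which mirrors the concern about word-for-word translation losing expressive intent.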

These tools are already making tangible impacts across critical environments. In healthcare, patients who once relied on overburdened interpreters can now communicate symptoms, concerns, and treatment plans directly via translation devices, which can reduce the risk of misdiagnosis and support informed choices. In education, classrooms open up: deaf students follow lessons through personal translation devices while viewing sign language at their own pace, fostering inclusion without isolating them from their peers.

Socially, family gatherings and public events no longer exclude deaf individuals, supported by portable translators that fit in pockets or mount on glasses.

Execution challenges remain. Sign languages are rich, dynamic, and non-universal—German Sign Language differs significantly from British or American Sign Language, requiring localized model training.

Latency must be nearly zero for seamless conversation, demanding efficient cloud-edge computing. Privacy concerns arise when voice and video data are processed, though recent systems emphasize on-device processing and strict data anonymization.
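The trade-off between latency and privacy can be illustrated with a simple routing rule. The sketch below assumes a hypothetical `Route` record and made-up timing figures; it is not drawn from any specific system. It prefers on-device processing whenever that fits the latency budget and falls back to the fastest available route otherwise.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Route:
    """A processing option with illustrative (not measured) timings."""
    name: str
    compute_ms: float       # estimated model inference time
    network_ms: float       # estimated round-trip time, 0 for on-device
    keeps_data_local: bool  # True if audio/video never leaves the device

    @property
    def total_ms(self) -> float:
        return self.compute_ms + self.network_ms


def choose_route(routes: List[Route], budget_ms: float) -> Route:
    """Pick a route within the budget, preferring local processing.

    If no route meets the budget, return the fastest one so the
    conversation degrades gracefully instead of stalling.
    """
    within_budget = [r for r in routes if r.total_ms <= budget_ms]
    if not within_budget:
        return min(routes, key=lambda r: r.total_ms)
    # Sort key: local routes first (False sorts before True), then by speed.
    return min(within_budget, key=lambda r: (not r.keeps_data_local, r.total_ms))


on_device = Route("on-device", compute_ms=180, network_ms=0, keeps_data_local=True)
cloud = Route("cloud", compute_ms=40, network_ms=90, keeps_data_local=False)

print(choose_route([on_device, cloud], budget_ms=200).name)
print(choose_route([on_device, cloud], budget_ms=150).name)
```

With these assumed numbers, a 200 ms budget keeps processing on the device, while a tighter 150 ms budget pushes the request to the cloud route.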

Equally important is the human dimension. Technology enhances, but does not replace, the value of professional interpreters and the cultural depth that only trained interpreters convey. “The best outcomes come from hybrid models: AI handles immediate translation, while interpreters ensure accuracy in complex or nuanced exchanges,” emphasizes Prof. Anja Weber, head of a European sign language research network. “These tools democratize access, but empathy and context remain uniquely human.”
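One way to picture the hybrid model is as a routing decision made per utterance. The following sketch is purely illustrative: the function `route_utterance`, the confidence threshold, and the list of sensitive terms are assumptions introduced here, not part of any described system.

```python
from typing import Sequence


def route_utterance(
    text: str,
    confidence: float,
    threshold: float = 0.85,  # assumed cut-off, would need tuning in practice
    sensitive_terms: Sequence[str] = ("diagnosis", "consent", "surgery"),
) -> str:
    """Return "ai" or "interpreter" for a single utterance.

    Utterances containing a sensitive term always go to a human interpreter;
    otherwise the automatic translation is used only when the model's
    confidence score meets the threshold.
    """
    lowered = text.lower()
    if any(term in lowered for term in sensitive_terms):
        return "interpreter"
    return "ai" if confidence >= threshold else "interpreter"


print(route_utterance("The bus leaves at nine.", confidence=0.95))
print(route_utterance("We need your consent for the procedure.", confidence=0.99))
print(route_utterance("It's raining cats and dogs.", confidence=0.60))
```

Everyday, high-confidence phrases stay with the automatic system, while sensitive or uncertain exchanges are handed to a person, which is the division of labor the hybrid approach describes.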

Adoption rates are rising steadily. Surveys show over 60% of deaf users under 35 report increased confidence in professional and social settings since integrating real-time translation. Manufacturers are expanding into affordable, user-friendly devices, while public institutions increasingly embrace hybrid communication strategies.

Still, barriers persist in rural areas and underfunded schools, highlighting the urgent need for policy support and infrastructure investment.

Looking forward, the trajectory is clear: translation technology is evolving toward multimodal integration—combining audio, video, haptic cues, and even neural interfaces. Researchers are testing brainwave-driven sign recognition and augmented reality (AR) glasses that overlay real-time translations directly onto the environment.

“The goal is seamless interaction,” says Lukas, “where deaf individuals engage with language as naturally as hearing peers do—no translation, no delay, just connection.”

“Googles für Gehörlose” symbolizes more than a technological feat; it embodies a shift in societal recognition: deafness is not a void but a vibrant linguistic community deserving full inclusion. By breaking down communication barriers through intelligent, adaptive translation, society moves closer to a world where no one is silenced by language or by technology, and where inclusion happens by design. In this future, every voice matters, every gesture counts, and connection thrives in real time.
