Decoding LM In Quantity: What It Really Means in Data and Decision-Making

In an era defined by data overload, understanding the precise role of “LM in quantity” is essential for leveraging metrics meaningfully across science, business, and policy. Short for “Latent Measure in Quantity,” this concept captures unobserved but quantifiable influences that shape outcomes yet remain hidden beneath surface-level data. Far more than a statistical footnote, LM in quantity reveals how invisible variables—such as customer sentiment, systemic bias, or latent market trends—drive real-world patterns.

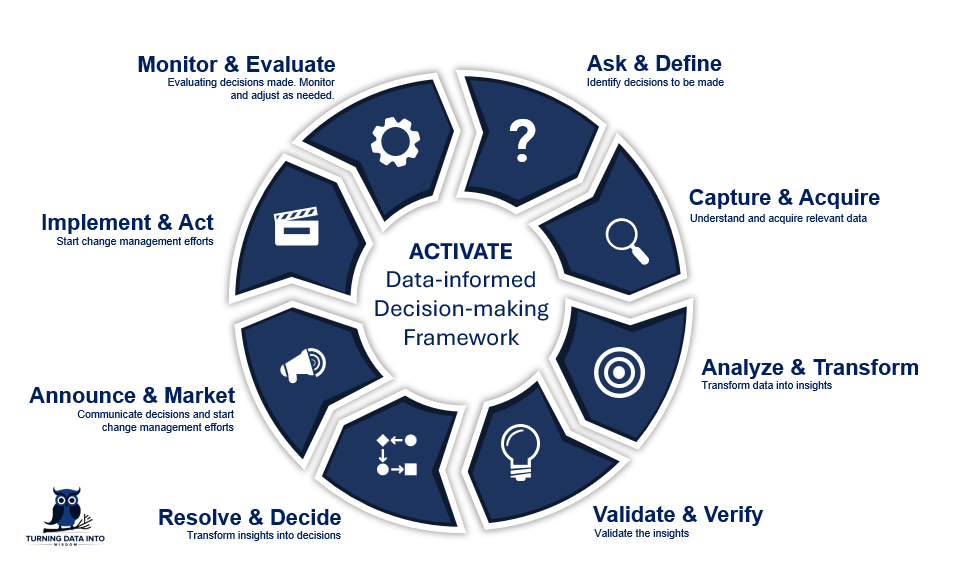

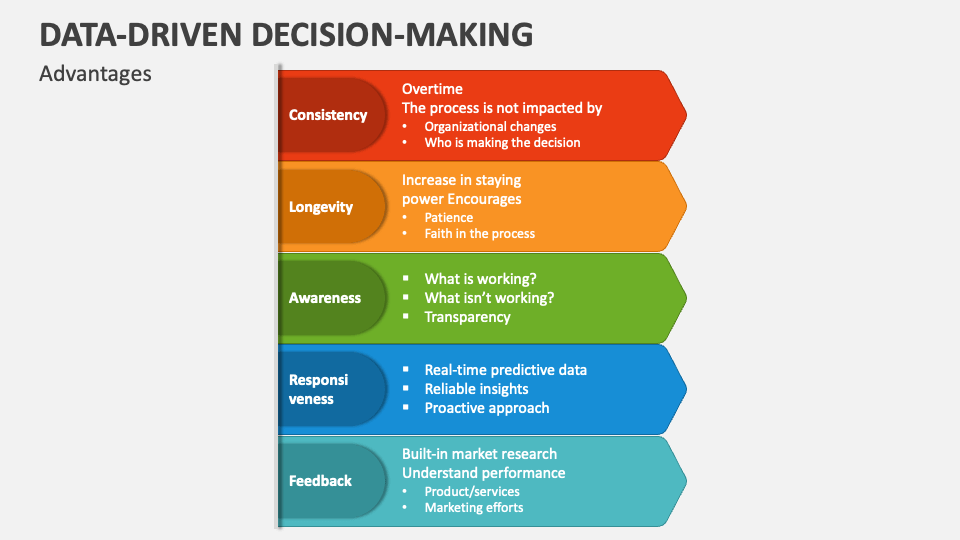

Interpreting this concept well helps professionals build more accurate models, anticipate risks, and make decisions grounded in deeper insight rather than surface appearances. At its core, LM in quantity represents a bridge between observed metrics and unmeasured forces. Unlike directly measured variables such as revenue or age, latent measures are inferred from indirect signals, using proxies and statistical inference to estimate what cannot be observed outright.

Consider customer satisfaction: a score derived from reviews, feedback forms, and behavioral cues functions as an LM in quantity, shaping loyalty and conversion in ways direct sales data cannot fully explain.
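As a rough illustration of how several proxies might be folded into one satisfaction score, the sketch below standardizes each proxy and averages the results per customer. The proxy names, the sample data, and the equal weighting are all assumptions made for this example; a real analysis would choose and weight proxies based on domain knowledge.

```python
from statistics import mean, pstdev

def zscores(values):
    """Standardize a list of numbers to mean 0 and population std 1."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        raise ValueError("proxy has no variation; cannot standardize")
    return [(v - mu) / sigma for v in values]

def composite_score(proxies):
    """Average the z-scored proxies per customer.

    Equal weighting is an assumption of this sketch, not a rule.
    """
    standardized = [zscores(column) for column in proxies.values()]
    return [mean(row) for row in zip(*standardized)]

# Hypothetical observations for five customers (illustrative only).
proxies = {
    "review_stars": [5, 4, 2, 3, 1],
    "survey_score": [9, 8, 4, 6, 2],
    "repeat_visits": [7, 5, 1, 3, 0],
}

scores = composite_score(proxies)
for customer, score in enumerate(scores, start=1):
    print(f"customer {customer}: {score:+.2f}")
```

Standardizing first keeps a proxy with a wide numeric range (such as visit counts) from dominating the others. The resulting score is relative to the sample: it ranks customers against each other rather than measuring satisfaction on an absolute scale.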

This latent territory operates within the broader framework of statistical modeling and machine learning, where rigorous inference lets analysts extrapolate from what is counted to what is felt, known, or implied. What makes LM in quantity compelling is its dual nature: it is both abstract and actionable.

By encoding subtle factors that cannot be measured directly into quantitative frameworks, decision-makers gain visibility into the underlying drivers of complex systems. For example, in product development, LM in quantity might capture user anticipation or emotional engagement, translating qualitative reactions into usable data points. “It’s not just what people say,” explains Dr. Elena Torres, a data scientist specializing in behavioral analytics. “It’s what their behavior and implicit feedback quietly reveal about latent needs and biases.”

Measurement of LM in quantity relies on a combination of qualitative proxies, econometric modeling, and advanced pattern recognition. Common methods include factor analysis, structural equation modeling, and natural language processing techniques that extract sentiment or intent from unstructured text.

These tools decode signals (such as customer service tone, social media mimicry, or employee survey sentiment), transforming them into quantifiable inputs. The key advance lies in validating these latent variables through cross-referenced data, ensuring robustness. As Dr. Marcus Lin, a researcher in computational social science, notes: “LM in quantity is not magic; it’s a disciplined lens that uncovers patterns others miss.”
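One simple way to check whether a set of proxies plausibly reflects a single latent measure is an internal-consistency statistic such as Cronbach's alpha. The article does not name this statistic; it is introduced here only as an illustrative sketch, and it assumes the proxies are scored on comparable scales. The sample data is hypothetical.

```python
from statistics import variance

def cronbach_alpha(items):
    """Cronbach's alpha for k proxy columns, each a list of n scores.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of totals)
    Assumes the columns are on comparable scales (an assumption of this sketch).
    """
    k = len(items)
    if k < 2:
        raise ValueError("need at least two proxies")
    totals = [sum(row) for row in zip(*items)]
    total_var = variance(totals)
    if total_var == 0:
        raise ValueError("summed scores have no variation")
    item_var = sum(variance(column) for column in items)
    return (k / (k - 1)) * (1 - item_var / total_var)

# Hypothetical scores from two proxies for four respondents.
proxy_a = [1, 2, 3, 4]
proxy_b = [2, 1, 4, 3]

alpha = cronbach_alpha([proxy_a, proxy_b])
print(f"alpha = {alpha:.2f}")
```

Values closer to 1 suggest the proxies move together, which is consistent with (though not proof of) a shared underlying factor. A low value is a prompt to revisit the choice of proxies before feeding them into factor analysis or structural equation modeling.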

In practice, LM in quantity transforms how industries approach forecasting and strategy. In healthcare, patient-reported outcomes often serve as latent measures influencing treatment success beyond clinical readings. In finance, sentiment indicators derived from news and social media feed into risk models, revealing market shifts before traditional metrics register them.

Even urban planning benefits: anonymized mobility patterns and app usage act as proxies for latent congestion or demand, guiding infrastructure investment. “Quantifying the unquantifiable gives leaders foresight,” says Sarah Chen, director of analytics at a leading smart city initiative. “LM in quantity turns noise into signal.”

The concept also poses critical challenges. By design, latent measures are interpretive and model-dependent, raising questions about validity and bias. Misinterpreting LM in quantity can lead to flawed decisions, especially when proxy variables fail to represent true underlying dynamics. Transparency in methodology, rigorous validation, and interdisciplinary collaboration are therefore essential.

As the field evolves, incorporating expertise from disciplines such as psychology and sociology, along with knowledge of the specific domain, strengthens the credibility of inferred latent variables. “No algorithm replaces human judgment in discerning what matters,” cautions Dr. Lin. “LM in quantity thrives when paired with context.”

Ultimately, understanding LM in quantity is not just a technical skill but a strategic necessity. It reflects a maturity in data interpretation—moving beyond what is easy to measure toward what drives real impact. As organizations increasingly rely on nuanced, real-time insights, mastering latent measurement becomes central to staying ahead.

It is the unseen thread weaving together raw data, human behavior, and predictive power. In the grand ecosystem of modern analytics, LM in quantity stands as a testament to the power of probing deeper—revealing that true understanding often lies not in what counts, but in what, though unseen, shapes what counts most.
