Install Python Libraries in Azure Databricks: Your Essential Step-by-Step Guide

Accelerate your data-driven workflows by mastering the essential step of installing Python libraries in Azure Databricks, a critical bridge between powerful analytics and scalable cloud infrastructure. This guide delivers a clear, step-by-step pathway to deploy Python packages within Databricks environments, so data engineers and scientists can extend their analytical capabilities. From setting up credentials to managing library versions, the process combines precision with practicality to turn theory into immediate operational advantage.

Why Modular Libraries Matter in Azure Databricks

Modern data workflows depend on modular, reusable components to handle complex processing, model training, and real-time analytics. In Azure Databricks, Python libraries serve as the backbone for customized computations and machine learning pipelines. Unlike monolithic installations, modular library deployment enables granular control, version isolation, and efficient resource utilization across collaborative teams. As the Databricks ecosystem evolves, installing and managing these libraries becomes not just a technical task, but a strategic enabler of innovation.

The ability to import pandas, scikit-learn, XGBoost, or even custom packages directly into notebooks ensures agility in transforming raw data into actionable insights.

Prerequisites: Preparing Your Azure Databricks Environment

Before diving into library installation, foundational setup is critical. Ensure you are authenticated and connected to your Azure Databricks workspace using the CLI or the workspace UI.

- **Active Cluster**: A cluster must be provisioned and running with sufficient compute capacity.
- **Python Framework**: Each Databricks Runtime version ships with its own Python version and a set of preinstalled packages, including `pyspark` and `ipykernel`, which provide the essential notebook integration.
- **Permission Setup**: The user needs permission to attach notebooks to the cluster in order to install notebook-scoped packages with `%pip`, and manage-level permission on the cluster to install cluster libraries.
- **Version Control**: Maintaining package compatibility hinges on specifying exact versions; install with an explicit pin such as `==1.2.3` to avoid unexpected runtime errors.

For optimal security, all installations should leverage managed Azure Storage or Databricks.
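As a quick way to confirm the prerequisites from inside a notebook, the sketch below reports the Python version and the Databricks Runtime version. It assumes the cluster exposes the runtime version through the `DATABRICKS_RUNTIME_VERSION` environment variable; the function name `describe_environment` is hypothetical.

```python
import os
import sys


def describe_environment(env=None):
    """Summarize the Python version and, if present, the Databricks Runtime version."""
    env = os.environ if env is None else env
    return {
        "python": ".".join(str(part) for part in sys.version_info[:3]),
        # Assumption: Databricks clusters set DATABRICKS_RUNTIME_VERSION;
        # outside Databricks this value is simply None.
        "databricks_runtime": env.get("DATABRICKS_RUNTIME_VERSION"),
    }


print(describe_environment())
```

Knowing the runtime version up front helps when choosing package versions that are compatible with the preinstalled ones.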

The Step-by-Step Installation Guide

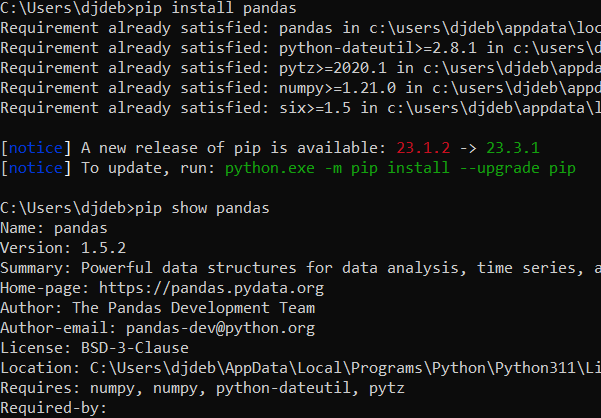

Installing Python libraries in Azure Databricks follows a streamlined process that leverages native command-line interfaces or interactive notebooks, balancing speed with traceability.

Step 1: Verify the Notebook Environment

Begin by attaching a notebook to a running cluster and running `%pip list` in a cell to inspect the installed packages, or `%pip show <package>` to check a single package. This initial check confirms that the runtime environment is ready for package installation.

Step 2: Select the Target Notebook Session

Each notebook runs in its own isolated Python environment. Packages installed with `%pip install` are notebook-scoped: they are visible only to the current notebook session and must be reinstalled after the notebook is detached or the cluster restarts. To make a package available to every notebook on a cluster, install it as a cluster library instead.
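The environment check in Step 1 can also be done programmatically. The following is a minimal sketch using the standard library's `importlib.metadata`; the helper name `installed_version` and the package names in the loop are illustrative choices, not part of any Databricks API.

```python
from importlib import metadata


def installed_version(package):
    """Return the installed version string for a package, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


# Report which of a few commonly used packages are present in this session
for name in ("pandas", "numpy", "scikit-learn"):
    print(name, installed_version(name) or "not installed")
```

Because the lookup runs in the notebook's own Python process, it reflects the notebook-scoped environment rather than a separate shell.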

Step 3: Install Core Libraries with `%pip`

Use `%pip install` with pinned versions to lock dependencies. The following examples demonstrate essential installs:

- **Pandas | scikit-learn | NumPy**: cornerstones for data wrangling and ML:

```python
%pip install pandas==1.5.3 numpy==1.24.3 scikit-learn==1.3.0
```

- **PySpark Integration**: Databricks' native engine already includes `pyspark` for distributed processing. Reinstalling it with pip can conflict with the Spark version of the runtime, so check the bundled version instead:

```python
# PySpark is provided by the runtime; confirm its version rather than reinstalling it
import pyspark
print(pyspark.__version__)
```

- **Specialized Tools (e.g., TensorFlow, XGBoost)**: recommended for model training and deployment:

```python
%pip install tensorflow==2.13.0 xgboost==1.7.2
```

Step 4: Automate Across Clusters

To install the same set of libraries consistently, manage them through a `requirements.txt` file or as cluster libraries. A sample `requirements.txt` file supports version control:

```
pandas==1.5.3
numpy==1.24.3
scikit-learn==1.3.0
tensorflow==2.13.0
```

Run the following, adjusting the path to wherever the file is stored:

```python
%pip install -r requirements.txt
```
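Before installing from a requirements file, it can help to confirm that every entry carries an exact pin. The sketch below is a simplified check, assuming one requirement per line; it does not handle every syntax pip accepts (for example, URLs containing `#`). The function name `unpinned_requirements` is hypothetical.

```python
def unpinned_requirements(text):
    """Return requirement lines that lack an exact '==' version pin."""
    unpinned = []
    for raw_line in text.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line or line.startswith("-"):      # skip blanks and pip options
            continue
        if "==" not in line:
            unpinned.append(line)
    return unpinned


sample = """
pandas==1.5.3
numpy>=1.24
scikit-learn
"""
print(unpinned_requirements(sample))  # ['numpy>=1.24', 'scikit-learn']
```

An empty result means every listed package is pinned to a single version.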

Best Practices for Dependency Management

- **Pin All Versions**: Use exact version numbers (e.g., `==2.3.0`) to prevent compatibility issues across cluster restarts.
- **Isolate Environments**: For large teams, use separate clusters or notebook-scoped libraries to segment project-specific packages.
- **Audit Regularly**: Use `%pip freeze` to list installed libraries with their versions, and save the output (for example, as `dependencies.txt`) to verify integrity during onboarding or refactoring.
- **Leverage Databricks Libraries Catalog**: Explore official packages via the Databricks Marketplace, which offers trusted modules with managed access.
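To make the audit step concrete, two saved freeze outputs can be compared to see what changed between them. This is a minimal sketch that only understands simple `name==version` lines; the helper names `parse_freeze` and `diff_snapshots` and the sample data are illustrative.

```python
def parse_freeze(text):
    """Turn 'name==version' lines into a {name: version} dict."""
    packages = {}
    for line in text.splitlines():
        name, sep, version = line.strip().partition("==")
        if sep:  # ignore lines that are not simple pins (e.g. editable installs)
            packages[name.lower()] = version
    return packages


def diff_snapshots(before, after):
    """Report packages added, removed, or changed between two snapshots."""
    old, new = parse_freeze(before), parse_freeze(after)
    return {
        "added": sorted(set(new) - set(old)),
        "removed": sorted(set(old) - set(new)),
        "changed": sorted(n for n in set(old) & set(new) if old[n] != new[n]),
    }


before = "pandas==1.5.3\nnumpy==1.24.3\n"
after = "pandas==1.5.3\nnumpy==1.26.0\nxgboost==1.7.2\n"
print(diff_snapshots(before, after))
# {'added': ['xgboost'], 'removed': [], 'changed': ['numpy']}
```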

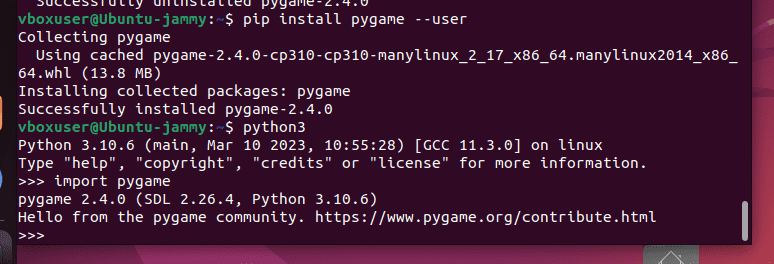

Common Challenges and Solutions

Installing libraries in Databricks is generally seamless but can surface hurdles.

- **Permission Errors**: Confirm that the user can attach notebooks to the cluster (for notebook-scoped installs) or holds manage-level permission on the cluster (for cluster libraries).
- **Library Conflicts**: Rely on notebook-scoped libraries, which give each notebook its own isolated environment, or package custom code with its own `setup.py` so that its dependencies are declared explicitly.
- **Version Mismatches**: Use `%pip list --outdated` to detect stale packages, then resolve via version pinning or safe upgrades.
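When several projects share a cluster, conflicts often come from the same package being pinned to different versions. The sketch below flags such cases across named requirement lists; the function `conflicting_pins` and the project names are hypothetical, and only simple `name==version` entries are considered.

```python
from collections import defaultdict


def conflicting_pins(requirement_sets):
    """Find packages pinned to different versions across named requirement lists."""
    versions = defaultdict(dict)  # package -> {source name: pinned version}
    for source, lines in requirement_sets.items():
        for line in lines:
            name, sep, version = line.strip().partition("==")
            if sep:
                versions[name.lower()][source] = version
    return {
        pkg: pins for pkg, pins in versions.items()
        if len(set(pins.values())) > 1
    }


projects = {
    "forecasting": ["pandas==1.5.3", "numpy==1.24.3"],
    "churn_model": ["pandas==2.0.3", "numpy==1.24.3"],
}
print(conflicting_pins(projects))
# {'pandas': {'forecasting': '1.5.3', 'churn_model': '2.0.3'}}
```

Projects that appear in the result are candidates for separate clusters or notebook-scoped installs.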

Mastering Python library installation in Azure Databricks is more than a technical chore—it’s a gateway to maximum analytical flexibility, performance, and reliability.

By following structured, secure, and version-controlled deployments, teams unlock consistent execution across clusters, faster experimentation cycles, and robust ML production workflows. The process, though straightforward, demands intentional setup and disciplined management to sustain long-term efficiency.

In sum, installing Python libraries in Azure Databricks is a foundational skill that turns static notebooks into dynamic, scalable data platforms, enabling organizations to use Python's full power within their cloud-native data ecosystems with confidence and precision.
