Master List Crawling: Techniques, Tools, and Best Practices for Efficient Data Harvesting

Master List Crawling: Techniques, Tools, and Best Practices for Efficient Data Harvesting

In an era defined by data-driven decision-making, list crawling has emerged as a vital skill for businesses, marketers, researchers, and developers alike. Extracting structured information from websites—especially product catalogs, contact directories, research databases, and public registries—empowers organizations to build robust datasets, benchmark competitors, and fuel analytics. Understanding the nuances of list crawling techniques, leveraging the right tools, and implementing best practices is no longer optional; it’s essential for competitive advantage and operational efficiency.

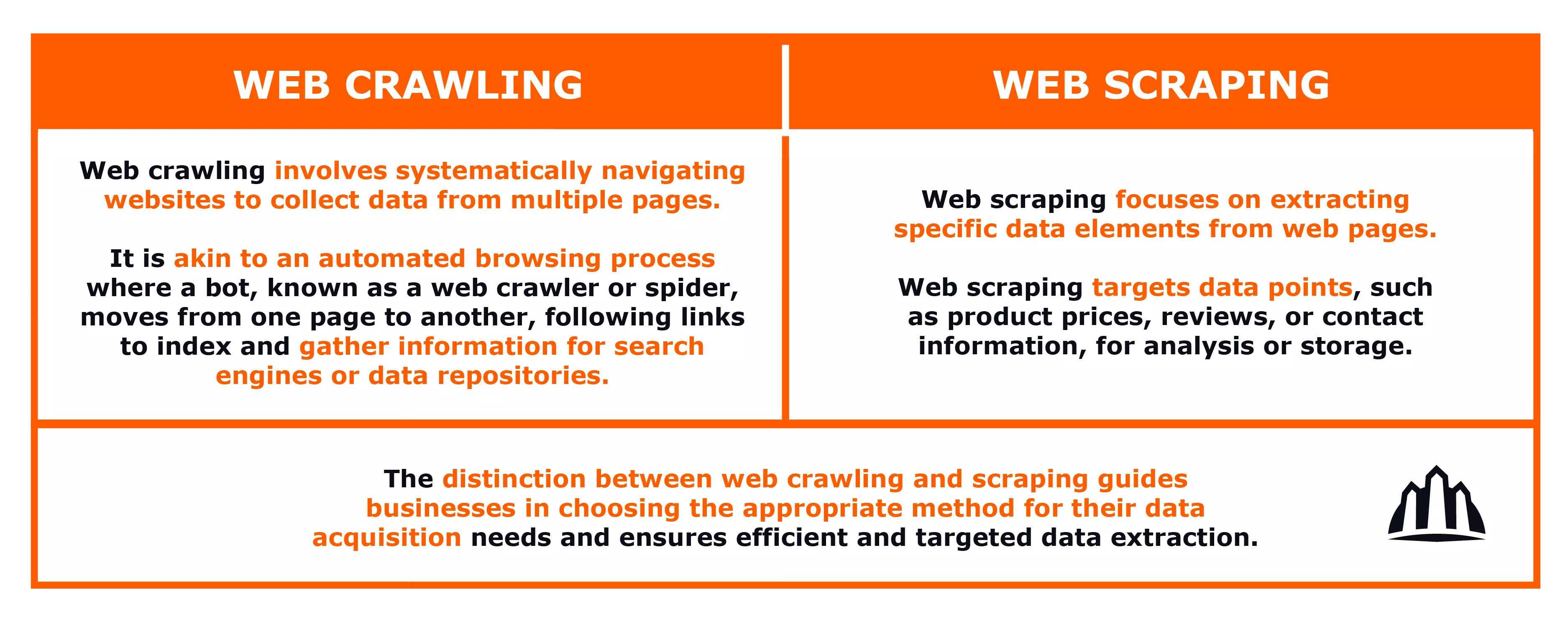

List crawling, also known as web crawling when applied broadly, involves automated bots systematically traversing websites to extract specific data points—often from employee lists, product inventories, or event registries. While seemingly straightforward, effective list harvesting demands strategic planning, technical precision, and awareness of ethical and legal boundaries. This article explores proven techniques, leading tools, and industry best practices that transform raw data extraction into actionable intelligence.

Core Techniques in List Crawling: Precision and Performance

Successful list crawling begins with understanding the available methodologies, each suited to different data sources and target structures.Structured vs. Unstructured Data Extraction

- **Structured data** resides in predictable formats—HTML tables, JSON APIs, or properly formatted XML—making extraction faster and more reliable. Tools optimized for tabular data parse row-and-column patterns with high accuracy.- **Unstructured data**, found in free-flowing content like web pages or PDFs, requires natural language processing (NLP) and pattern recognition. Techniques such as regex-based matching or semantic parsing help identify meaningful entries amidst noise. - Hybrid approaches combine both, first isolating structured sections, then applying contextual analysis to unstructured blocks.

This layered strategy maximizes completeness while minimizing false positives.

Direction-Following and Recursive Crawling

Crawlers must navigate websites intelligently: respecting navigation hierarchies ensures data relevance. A recursive crawl—starting from a homepage and descending into category pages—preserves logical context.For example, crawling an employee directory should begin at a primary "Staff" page, then systematically explore subpages by department, role, or location. Advanced crawlers track internal links and utilize sitemaps to avoid stale or redundant URLs, improving coverage and efficiency.

Handling Anti-Crawling Defenses

Modern websites deploy anti-bot measures—CAPTCHAs, IP throttling, user-agent detection—to limit automated scraping.Expert crawlers counter these challenges through techniques such as: - **Rotating proxies** to mimic diverse user geolocations and evade IP bans. - **Dynamic user-agent mimicry**, rotating headers to resemble legitimate browsers. - **Delayed request intervals**, reducing bot-like behavior patterns.

- **Session management**, preserving cookies and navigation state to appear human-like. As one industry analyst noted, “The key is not just speed, but stealth.” Without adaptive defenses, even well-designed crawlers risk being blocked before they gather meaningful data.

Top Tools Powering Modern List Crawling

A growing ecosystem of tools simplifies list harvesting, ranging from open-source libraries to managed SaaS platforms.Each offers distinct advantages based on project scale, complexity, and technical expertise needed.

Open-Source Frameworks: Flexibility Meets Expertise

- **Scrapy**: Python’s robust, production-ready framework supports custom spiders for structured crawling, built-in middleware for proxy rotation, and pipelines for data cleansing. Ideal for developers needing granular control over request logic and data flow.- **Apify and Parsium**: Both offer no-code or low-code interfaces combined with powerful headless browsers. Apify excels in scalable cloud crawling with built-in OCR for image-based data, while Parsium supports complex DOM manipulation for dynamic pages. - **BeautifulSoup + Requests**: A lightweight duo perfect for beginner projects.

These tools parse static HTML efficiently and integrate seamlessly with Python scripts for small-scale extraction tasks.

Cloud-Based and SaaS Platforms: Speed and Scalability at Scale

- **Apify Console**: A browser-based editor where users build, test, and deploy crawlers using visual flowcharts and integrated API keys. It supports distributed execution across multiple environments for high-volume harvesting.- **Bright Data (formerly Lunalift)**: Offers SSL proxy networks, anti-detection capabilities, and AI-powered match rates, enabling reliable data extraction from complex, JavaScript-heavy sites like e-commerce platforms. - **Import.io**: Combines visual scraping with AI to identify data patterns autonomously, reducing manual coding for users with limited programming experience.

Managed Crawling Services: Outsourcing Expertise

Enterprises lacking in-house capabilities increasingly rely on third-party crawl-as-a-service providers.These platforms handle infrastructure, maintenance, legal compliance, and anti-bot evasion, delivering ready-to-use datasets without heavy upfront investment. This trend reflects a shift toward operational efficiency—allowing teams to focus on analysis rather than technical overhead.

Best Practices: Building a Reliable and Ethical Crawling Workflow

Deploying best practices ensures not only accuracy and completeness but also compliance and scalability.Respect Robots.txt and Terms of Service

Every website publishes aRate Limiting and Stealthy Execution

Overloading servers risks blocking access or triggering defensive mechanisms. Implementing polite request pacing—typically 1–2 seconds per page—maintains goodwill with target sites. Techniques like randomized delays and sequential URL processing mimic organic user behavior while maximizing throughput.Data Validation and Quality Assurance

Extracted data often contains inconsistencies—misspelled names, missing fields, format mismatches. Implementing validation layers—four-level checks for accuracy, completeness, and consistency—ensures datasets meet downstream analytics or integration needs. Tools like JSON Schema or custom regex patterns enable real-time schema enforcement during ingestion.Automated Monitoring and Logging

Robust white-and-black logging tracks crawl success, errors, anomalies, and performance metrics. Automated alerts notify teams of dropped pages, failed requests, or sudden drops in extraction rates. This transparency enables rapid troubleshooting and continuous improvement.Scalability and Distributed Architecture

As target datasets grow, single-node crawlers reach performance ceilings. Designing distributed crawling systems—using message queues, parallel workers, and horizontally scalable infrastructure—ensures reliability during high-volume harvesting, whether collecting millions of product SKUs or global employee records.Real-World Applications and Strategic Insights

List crawling serves diverse sectors with tailored use cases.In recruitment, automated harvesting populates talent databases from company websites and job boards, accelerating hiring pipelines. In competitive intelligence, monitoring rival product pages reveals pricing shifts, promotional strategies, and feature launches. Academic researchers use list crawling to compile datasets for social studies, public health tracking, and market trend analysis.

Even real estate platforms leverage crawling to aggregate listing data across multiple portals, enabling comprehensive market dashboards. Successful implementations emphasize alignment with business goals. A retail analytics firm, for instance, implemented a PyScrapy crawler with proxy rotation and Debezium-style event streaming, reducing data latency from days to minutes while maintaining 98% accuracy across 50+ global domains.

Another example: a nonprofit used Apify’s visual crawler to extract donor lists from charity websites—automating outreach and improving response rates by 40%.

Navigating Challenges: Ethics, Technical Limits, and evolving Web Standards

Despite its power, list crawling is fraught with challenges. Dynamic content rendered by JavaScript, CAPTCHA barriers, and site reprioritization via HTML fragility demand constant adaptation.Equally critical are ethical considerations: scraping personal data without consent violates privacy laws like GDPR and CCPA. Proactive compliance—data anonymization, consent management, and transparent opt-out—builds trust and mitigates risk. Legal uncertainty remains a concern, as jurisdictions interpret “crawling right” differently.

Leading organizations embed legal reviews into development cycles and maintain audit trails, ensuring defensibility even as regulations evolve.

Future Trends: AI, Real-Time Crawling, and Autonomous Intelligence

The next frontier in list crawling involves AI-driven adaptability. Machine learning models predict optimal crawl paths, prioritize high-value pages, and detect schema shifts autonomously.Real-time streaming crawlers, fed by event-driven architectures, deliver live data for instantaneous decision-making. As one technologist predicts, “We’re moving beyond static extraction—crawlers of the future will learn, reason, and evolve alongside the web itself.” This evolution promises unparalleled efficiency but demands rigorous ethical guardrails to preserve data integrity and fairness.

List crawling, when grounded in precise techniques, reliable tools, and disciplined best practices, transforms raw web data into strategic assets.

From structured tables to dynamic interfaces, the modern crawler—guided by intelligence, constrained by ethics—unlocks insights that fuel innovation across industries. As the digital landscape expands, mastery of list harvesting is no longer a niche skill but a core capability for organizations determined to lead in data-driven markets.

Related Post

Unlocking Web Data: Mastering List Crawling with Advanced Techniques, Tools, and Proven Best Practices

The Rising Star: Brett Climo’s Powerful Leap in Screen Performance

Is Chuck Norris Still Alive? The Enduring Legend Behind the Myth

Unraveling the Mystery: What Does WTV Mean in American Television?