Round Robin Scheduling: A Deep Dive into Fairness, Precision, and Real-Time Performance

In the relentless rhythm of computing systems, where every millisecond counts and equitable resource distribution is non-negotiable, Round Robin Scheduling stands as a cornerstone algorithm—trusted, simple, and remarkably effective in managing competing processes. Unlike static or priority-based approaches, Round Robin (RR) ensures every task receives a turn, eliminating starvation with a grace born from fairness and predictability. This deep dive uncovers how Round Robin works, its historical roots, its balance of efficiency and responsiveness, and the nuanced decisions behind its widespread adoption across operating systems, embedded devices, and cloud environments.

Origins and Core Philosophy: From Time-Sharing to Modern Computing

Born in the late 1950s during the dawn of time-sharing systems, Round Robin scheduling was designed to address a fundamental problem: how to share a single processor among multiple users or processes without one dominating the others. The algorithm’s elegance lies in its simplicity: each process is assigned a fixed time quantum, and processes are executed in a cyclical manner. When a quantum expires, the process is placed at the end of the queue, and the next eligible task runs. This fixed-interval approach ensures responsive behavior even under bursty workloads.

> “Round Robin captures the essence of fairness in scheduling: give everyone a moment, then move on,” explains Dr. Elena Marlow, a systems architect specializing in real-time systems. “It’s not merely about fairness; it’s about creating a deterministic execution model essential for predictable system behavior.”

This time-sharing foundation transformed computing from mainframe-only environments to multi-user platforms, enabling interactive sessions with minimal lag. As computing evolved, RR’s core principles adapted to dynamic, high-throughput contexts, from early minicomputers to modern edge devices and cloud orchestration platforms.

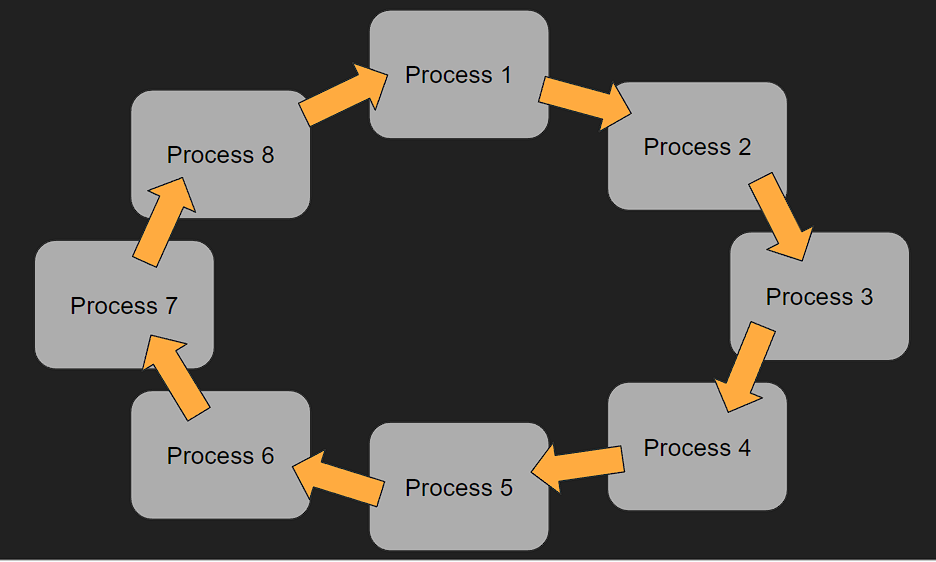

Mechanics of Round Robin: Simplicity with Cycle Structure

At its core, Round Robin operates through three key components: a fixed time quantum, a queue to manage process order, and a round-robin execution cycle. Each process is allocated a time slice measured in milliseconds or microseconds, depending on system design. Once a process runs for its quantum, it is paused and moved to the end of the queue, allowing the next task to execute. This cycling mechanism ensures:

- Every task gains regular access to the CPU.
- No process starves indefinitely.
- Latency remains bounded, a critical factor for interactive applications.

The time quantum is a pivotal parameter: it must be small enough to maintain responsiveness and large enough to minimize context-switching overhead. Too short a quantum causes excessive overhead; too long a quantum makes every process wait longer for its next turn. Optimal tuning often depends on workload characteristics and system architecture.
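The cycle described above can be sketched in a few lines of Python. This is a minimal simulation rather than a real scheduler: it assumes every process is ready at time 0, ignores context-switch cost, and uses a hypothetical function name (`round_robin`) and made-up burst times.

```python
from collections import deque

def round_robin(bursts, quantum):
    """Simulate round robin for processes that are all ready at time 0.

    bursts: dict mapping a process name to the CPU time it still needs.
    quantum: the fixed time slice, in the same unit as the bursts.
    Returns (timeline, completion): a list of (name, start, end) slices
    and a dict mapping each name to its finish time.
    """
    if quantum <= 0:
        raise ValueError("quantum must be positive")
    queue = deque(bursts.items())  # ready queue, in arrival order
    clock = 0
    timeline = []
    completion = {}
    while queue:
        name, remaining = queue.popleft()
        run = min(quantum, remaining)  # a full quantum, or less if it finishes
        timeline.append((name, clock, clock + run))
        clock += run
        remaining -= run
        if remaining > 0:
            queue.append((name, remaining))  # preempted: back of the queue
        else:
            completion[name] = clock  # finished: leaves the queue for good
    return timeline, completion

# Illustrative workload (hypothetical burst times, arbitrary time units).
timeline, completion = round_robin({"A": 5, "B": 3, "C": 1}, quantum=2)
for name, start, end in timeline:
    print(f"{start:>2}-{end:<2} {name}")
print(completion)
```

With these inputs, the short job C finishes at time 5 even though it was queued behind two longer jobs, which is the bounded-wait behavior the list above describes.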

Advantages: Responsiveness, Fairness, and Predictability

Round Robin’s appeal stems from its balanced combination of technical strengths. Its fixed time slices bound how long any ready task waits for the CPU, which keeps response times predictable even when the workload is not. This makes RR a good fit for interactive systems like browsers, game engines, and real-time dashboards, where user experience depends on immediate feedback.

Moreover, the algorithm’s inherent fairness eliminates the risk of task starvation. Every task gets a guaranteed slice, regardless of priority. For systems with diverse process types (latency-sensitive threads, background workers, and interactive sessions), RR ensures no single task can monopolize resources. Ericsson’s mobile switching systems, for example, rely on RR to maintain consistent call quality under high concurrency, avoiding jitter and stutter that degrade user experience.

Equally important is its simplicity. RR’s logic is straightforward to implement, audit, and optimize—critical in embedded systems where code size and reliability are paramount. Multi-core and distributed environments further benefit from RR’s stateless, queue-driven design, which scales naturally without complex state management.

Real-World Applications: From OS Kernels to Cloud Orchestration

In operating systems, Round Robin anchors process scheduling in Linux, Windows, and real-time environments. In Linux’s Completely Fair Scheduler (CFS), a modern evolution borrowing from RR principles, the kernel dynamically adjusts time-slice lengths based on process behavior, blending fairness with adaptability. This hybrid approach maintains responsiveness across home PCs, servers, and embedded IoT devices.

The CPUs in network routers, especially in edge computing nodes, use RR to handle concurrent packet processing with bounded latency, so that no flow is left waiting indefinitely. In virtualized environments, hypervisors apply RR-like scheduling to guest VMs, guaranteeing equitable bandwidth and CPU time across isolated tenants.

Cloud platforms extend these concepts into container orchestration. Kubernetes itself decides which node each container runs on rather than slicing CPU time, but the containers on a node are still time-sliced by that node’s kernel scheduler, echoing RR’s fairness ethos to maintain workload quality under variable demand. This helps keep containers from starving during peak loads, supporting high availability and consistent service levels.

“Round Robin’s quiet consistency makes it indispensable,” notes Dr. Rajiv Patel, a senior research scientist in distributed systems. “It’s not flashy, but it’s the silent guardrail ensuring all tasks contribute fairly to the system’s heartbeat.”

Trade-offs and Limitations: The Cost of Fairness

Despite its strengths, Round Robin introduces trade-offs that demand careful consideration. Fixed quanta keep latency predictable, but very short quantum settings increase context-switching overhead, eroding efficiency. Too small a quantum may turn the scheduler into a context-switching bottleneck.

Another limitation is the algorithm’s inherent latency cost: with n ready processes, a task may have to wait for up to n - 1 other quanta before it runs again, so worst-case response time grows with both the quantum length and the queue length. This makes RR less suitable for ultra-low-latency applications like high-frequency trading or medical monitoring, where even microsecond delays matter.
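A little arithmetic makes the overhead and latency trade-off concrete. The sketch below is a simplified model, not a measurement: it assumes every process uses its full quantum, that each context switch has a fixed cost, and the 0.1 ms switch cost and 20 ready processes are hypothetical numbers chosen for illustration.

```python
def cpu_efficiency(quantum_ms, switch_ms):
    """Fraction of CPU time spent on useful work when every slice
    runs for a full quantum and is followed by one context switch."""
    return quantum_ms / (quantum_ms + switch_ms)

def worst_case_wait_ms(n_ready, quantum_ms, switch_ms):
    """Time from the end of one of a process's slices to the start of
    its next one, when the other n_ready - 1 processes each use a full
    quantum and the CPU performs n_ready context switches in between."""
    return (n_ready - 1) * quantum_ms + n_ready * switch_ms

# Hypothetical system: 20 ready processes, 0.1 ms per context switch.
for q in (1, 10, 100):
    eff = cpu_efficiency(q, switch_ms=0.1)
    wait = worst_case_wait_ms(20, q, switch_ms=0.1)
    print(f"quantum={q:>3} ms  efficiency={eff:.1%}  worst wait={wait:.0f} ms")
```

Under these assumptions, shrinking the quantum shortens the worst-case wait roughly in proportion, while the share of CPU time lost to switching rises. That is the tension any quantum choice has to balance.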

Scalability also presents challenges in massive-scale distributed systems. While RR works well locally, maintaining global fairness across thousands of nodes requires hierarchical or hybrid approaches, adding complexity. Moreover, dynamic workloads—such as bursty IoT sensor data—can strain RR without adaptive quantum tuning, pushing implementations toward more sophisticated dispatchers.

Nevertheless, when fairness and predictability are non-negotiable, Round Robin’s proven model remains hard to beat in practical deployment.

Optimization Strategies: Tuning Quantum and Queue Logic

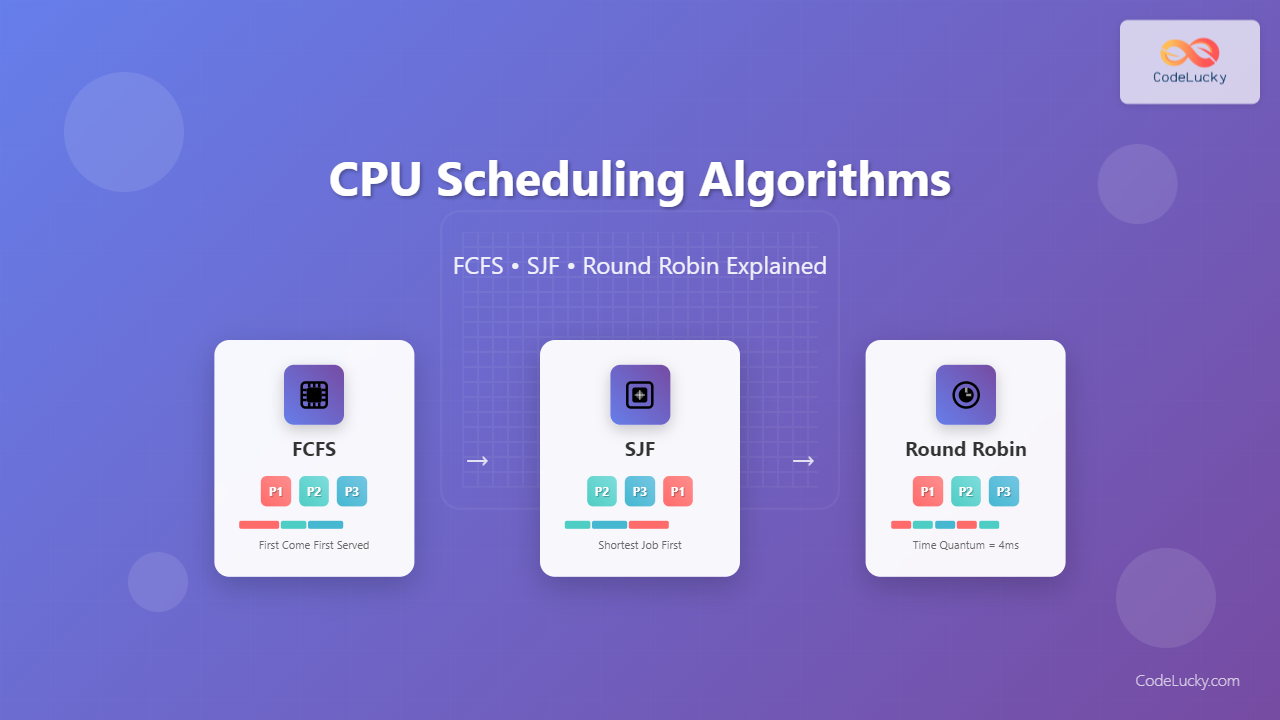

Successful Round Robin implementation hinges on intelligent parameter tuning. Selecting the right quantum size balances responsiveness with overhead. Commonly cited guidance puts the quantum somewhere between 10 and 100 milliseconds for server workloads, while interactive apps benefit from shorter cycles closer to 10–20 ms.

Queue organization shapes performance too. Ordering the ready queue first-come, first-served (FCFS) ensures fairness but risks delaying urgent tasks. Priority-enhanced RR variants allow preemption or weighted quantum allocation, integrating urgency without sacrificing equity.

Modern systems often layer RR with other scheduling policies. For instance, Linux combines CFS with RR elements, using feedback from process behavior to adjust time slices dynamically. Such hybrid models combine RR’s simplicity with adaptive intelligence, improving efficiency across diverse workloads. Adaptive quanta, which scale with CPU load or process behavior, represent an emerging frontier, enabling RR to remain agile without manual tuning. These innovations extend Round Robin’s relevance into AI-driven infrastructures and real-time edge compute where resource availability fluctuates.
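One way to picture weighted quantum allocation is to give each task a slice equal to a base quantum multiplied by a per-task weight. The sketch below is an illustration only: the function name `weighted_round_robin`, the task names, and the weights are all hypothetical, and it does not reproduce how CFS or any particular kernel computes time slices.

```python
from collections import deque

def weighted_round_robin(tasks, base_quantum):
    """Round robin where each task's slice is base_quantum * weight.

    tasks: list of (name, burst, weight) tuples, all ready at time 0.
    Returns the schedule as a list of (name, start, end) slices.
    """
    if base_quantum <= 0:
        raise ValueError("base_quantum must be positive")
    if any(weight <= 0 for _, _, weight in tasks):
        raise ValueError("weights must be positive")
    queue = deque(tasks)  # ready queue, in arrival order
    clock = 0
    timeline = []
    while queue:
        name, remaining, weight = queue.popleft()
        run = min(base_quantum * weight, remaining)  # weighted slice
        timeline.append((name, clock, clock + run))
        clock += run
        if remaining > run:
            # Still has work left: rejoin the back of the queue.
            queue.append((name, remaining - run, weight))
    return timeline

# Hypothetical workload: an interactive task weighted 3x a batch task.
timeline = weighted_round_robin([("ui", 6, 3), ("batch", 6, 1)], base_quantum=1)
for name, start, end in timeline:
    print(f"{start:>2}-{end:<2} {name}")
```

Every task still gets a turn on each pass through the queue, so starvation remains impossible. The weights only change how much of each pass a task receives.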

The Future of Round Robin: Evolving with Computing Paradigms

As computing embraces multi-core architectures, containerization, and AI, Round Robin continues to adapt. In edge devices handling massive concurrent sensor inputs, the algorithm ensures every data stream gets processor access, preventing lag in automated systems. In cloud-native environments, RR-friendly schedulers support thousands of containerized tasks with bounded latency, reinforcing reliability and scalability.

The rise of real-time microservices and other latency-sensitive systems further elevates RR’s role. By guaranteeing that each service thread or container executes within predictable time bounds, Round Robin contributes to the resilience of modern distributed applications.

Emerging research explores integrating RR with machine learning, predicting workload patterns to optimize quantum selection and queue order dynamically. Such innovations promise to unlock new levels of responsiveness without sacrificing fairness.

Round Robin Scheduling endures not by accident, but through deliberate design—fairness, simplicity, and predictability—and its ability to evolve with the ever-changing demands of computing. From mainframes to edge nodes, it remains the silent architect of equitable resource access, proving that the best scheduling is not the flashiest, but the most reliable.
